Owlbear Rodeo 2.0 Dev Log 4

Exploring the plans for the new Owlbear Rodeo 2.0 back-end architecture

Hi all!

It’s Nicola here with the dev log this month, so the update probably won’t be as visually exciting as it usually is.

For those of you who don’t know, our front-end (the user interface) is handled by Mitch, while I take care of the back-end (the server). I’m going to do my best to keep the technical jargon to a minimum.

Owlbear Rodeo 2.0 gives me an opportunity to address the issues with the way our servers currently run. At the moment, it’s impossible to perform any sort of update without disrupting client connections, and we’re extremely over-provisioned so that we can handle any increase in load. So when I started thinking about the new architecture for Owlbear Rodeo 2.0, there were essentially three things I wanted to achieve:

- Zero-downtime deployments

- Stress-free scaling

- Easy management

Owlbear Rodeo 1.0

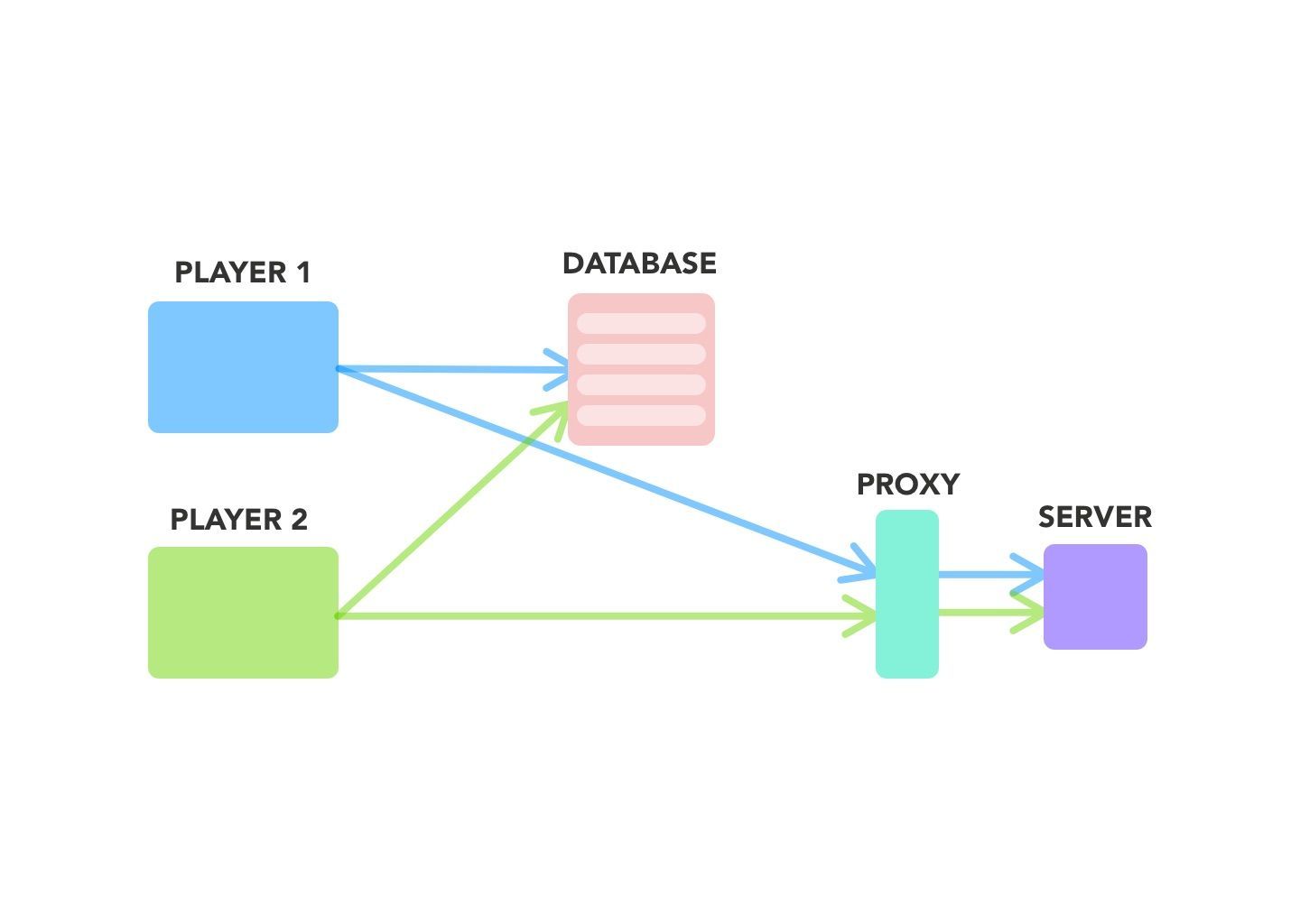

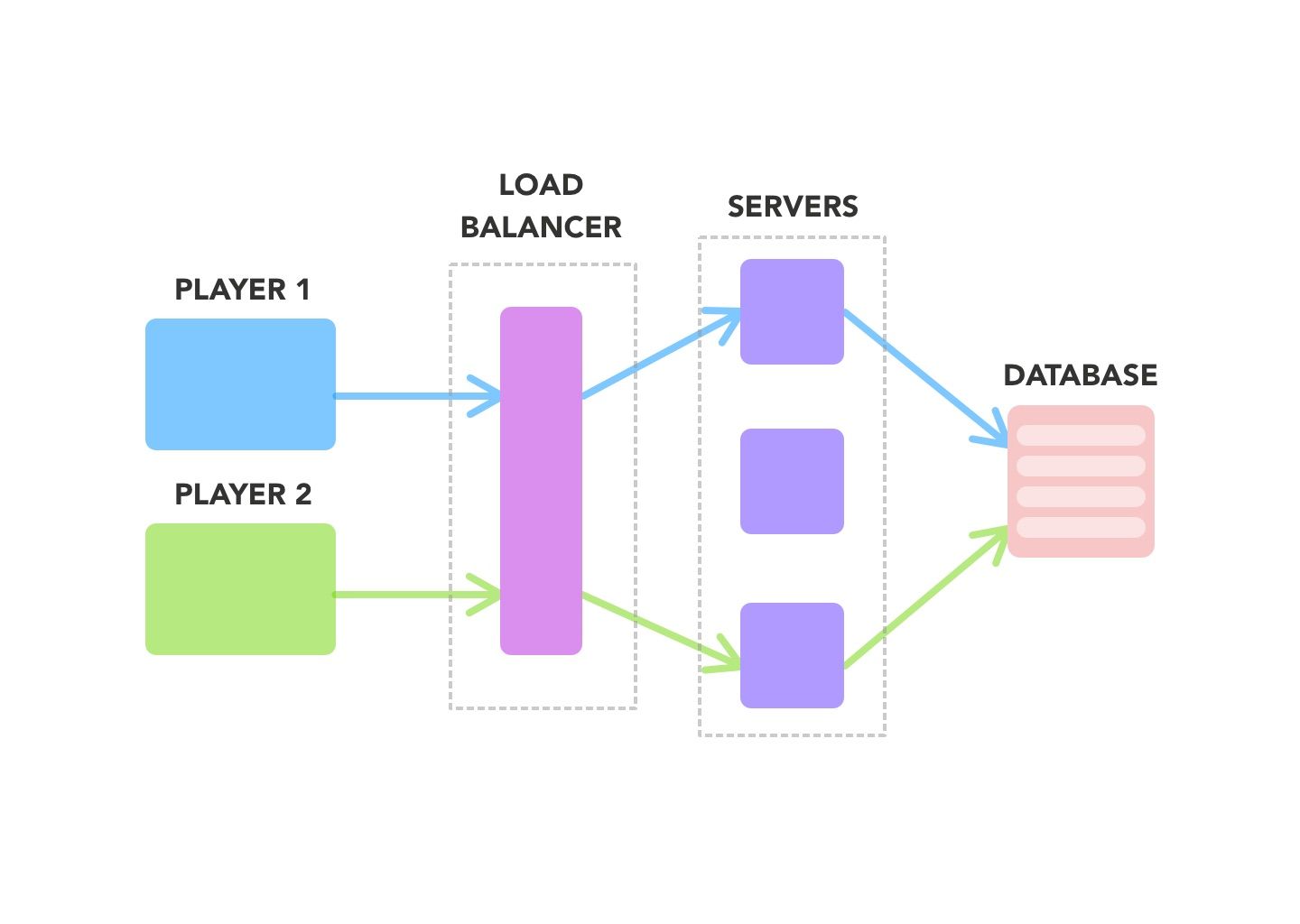

The current state of the Owlbear Rodeo back-end:

The diagram above shows how players currently connect to a room. Essentially, they are routed via a load balancer to any of the available servers, and are then made aware of other players’ changes via the database. This means that when you join a game in Owlbear Rodeo, someone else in your party could be on a different server than you.

The Problems

This works fine; however, these connections are WebSocket connections, which makes them long-lived. Most requests you make to a server are (usually) short: you make a request, get a reply, and you’re done. With longer-lived connections, you can end up in a scenario where some servers have more players than others, just because that’s what the load balancer has dictated. There are ways to mitigate this on the load balancer, but they weren’t supported by the technology we are using.
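To make the imbalance concrete, here is a minimal TypeScript sketch of long-lived connections arriving and leaving. Everything in it is an assumption for illustration: the names (`simulate`, `roundRobin`, `leastConnections`), the three servers, and the event sequence are all made up, and the post doesn’t say which balancing strategy or mitigation was actually considered. Least-connections is just one commonly used option.

```typescript
// Hypothetical simulation; not taken from the Owlbear Rodeo codebase.
// A picker decides which server a new connection lands on.
type Picker = (counts: number[], arrivalIndex: number) => number;

// Round-robin ignores how busy each server currently is.
const roundRobin: Picker = (counts, arrivalIndex) => arrivalIndex % counts.length;

// Least-connections sends the new connection to the emptiest server.
const leastConnections: Picker = (counts) => counts.indexOf(Math.min(...counts));

type ConnectionEvent =
  | { kind: "connect" }
  | { kind: "disconnect"; server: number };

// Replays the events and returns the number of open connections per server.
function simulate(serverCount: number, events: ConnectionEvent[], pick: Picker): number[] {
  const counts = new Array<number>(serverCount).fill(0);
  let arrivals = 0;
  for (const event of events) {
    if (event.kind === "connect") {
      counts[pick(counts, arrivals)] += 1;
      arrivals += 1;
    } else if (counts[event.server] > 0) {
      counts[event.server] -= 1;
    }
  }
  return counts;
}

// Six players join, the two on server 0 leave, then two more players join.
const events: ConnectionEvent[] = [
  { kind: "connect" }, { kind: "connect" }, { kind: "connect" },
  { kind: "connect" }, { kind: "connect" }, { kind: "connect" },
  { kind: "disconnect", server: 0 }, { kind: "disconnect", server: 0 },
  { kind: "connect" }, { kind: "connect" },
];

const roundRobinCounts = simulate(3, events, roundRobin);
const leastConnectionsCounts = simulate(3, events, leastConnections);

console.log(roundRobinCounts);       // [1, 3, 2]
console.log(leastConnectionsCounts); // [2, 2, 2]
```

Because the connections stay open, the round-robin run never evens out on its own: the server that lost its players stays quiet while another one keeps filling up.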

WebSocket connections are also very difficult to scale down, as destroying a server means interrupting players’ connections to a game. Creating custom scale-down logic was not something I wanted to manage, and the tools I found to handle this were not adequate. So now we’re stuck in the very traditional server management scenario of keeping servers up even though we don’t need them.

It’s also this exact reason that makes it difficult to update the server without downtime.

The Plan for Owlbear Rodeo 2.0

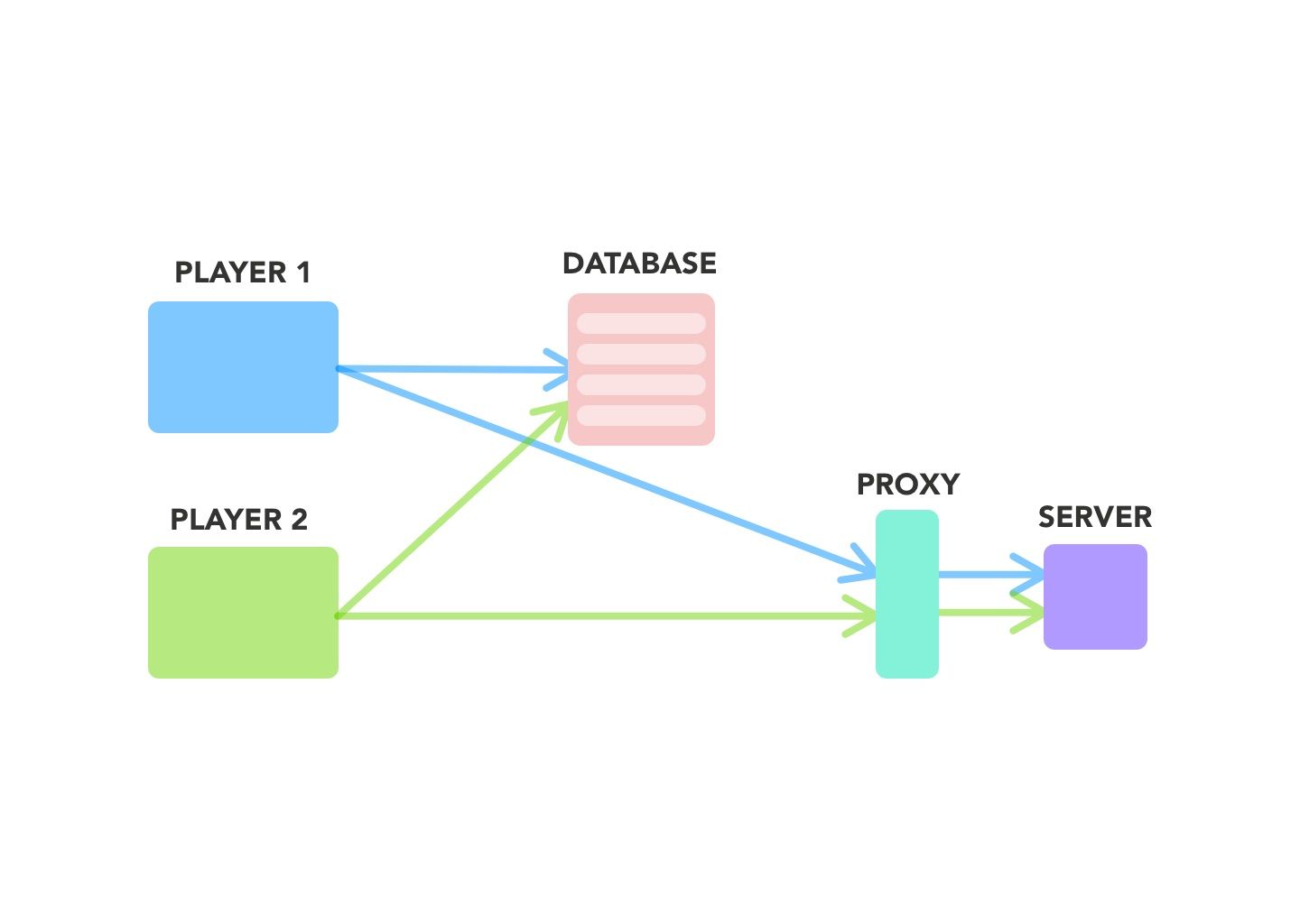

In Owlbear Rodeo 2.0, I’ve created more of a “sandbox” where every game is isolated to its own server.

As you can see in the diagram, when a client tries to connect to a room, a request is made to the database to see if that game already exists. If it does, the connection details are retrieved and the player is routed to that server via a proxy and connected to the rest of their party.

If the room doesn’t exist yet (when you create the game for the first time), an additional request is made to an internal API to create a new room (aka the server you’ll connect to), and the player is then connected to it. This isn’t shown in the diagram above for simplicity.
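The lookup-then-create flow described above can be sketched roughly like this in TypeScript. This is only an illustration under stated assumptions: `RoomStore`, `CreateRoomServer`, `resolveRoom`, and the in-memory store are hypothetical names standing in for the real database and internal API, and the sketch assumes the new room’s connection details get saved right after the server is created, which the post doesn’t spell out.

```typescript
// Hypothetical types and names; none of these come from the Owlbear Rodeo codebase.
interface RoomRecord {
  roomId: string;
  serverAddress: string; // where the proxy should route the player
}

// Stand-in for the database that holds each room's connection details.
interface RoomStore {
  find(roomId: string): Promise<RoomRecord | undefined>;
  save(record: RoomRecord): Promise<void>;
}

// Stand-in for the internal API that creates a dedicated server for a room.
type CreateRoomServer = (roomId: string) => Promise<string>;

// Returns the connection details for a room, creating its server on first use.
async function resolveRoom(
  roomId: string,
  store: RoomStore,
  createServer: CreateRoomServer,
): Promise<RoomRecord> {
  const existing = await store.find(roomId);
  if (existing) {
    return existing; // the game already exists: join the rest of the party
  }
  // First visit: ask the internal API for a new server.
  // Assumption: the connection details are stored straight afterwards.
  const serverAddress = await createServer(roomId);
  const record: RoomRecord = { roomId, serverAddress };
  await store.save(record);
  return record;
}

// Tiny in-memory store so the sketch runs without a real database.
class InMemoryRoomStore implements RoomStore {
  private rooms = new Map<string, RoomRecord>();

  async find(roomId: string): Promise<RoomRecord | undefined> {
    return this.rooms.get(roomId);
  }

  async save(record: RoomRecord): Promise<void> {
    this.rooms.set(record.roomId, record);
  }
}
```

With this shape, only the first player to open a room pays the cost of creating the server; everyone who joins afterwards gets the stored address back straight away. A real version would also need to handle two players opening the same new room at the same moment, which this sketch ignores.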

This is nice because it means that if a server crashes it only affects one game, rather than interrupting a small number of people across multiple games. It also helps with tracking down errors, as I only have to look into one game, rather than trying to find whodunit and then investigating from there. It helps with load balancing too, as servers can be created and destroyed as games are started and finished. Now we don’t have to worry about over-provisioning the number of servers: we have exactly what we need.

In order to be able to manage this myself, I’ve let AWS take the wheel with ✨ s e r v e r l e s s ✨

While this offers more flexibility with scaling and zero-downtime deployments, it does have the downside that the creator of the room has to wait for it to be created. We haven’t performed extensive testing, but it takes around 10 seconds for the server to be created and maybe an additional 5 seconds for the server to accept the connection. However, the benefits outweigh the time that one person has to wait while they essentially create their own private server. We’re thinking about putting a cute animation in there while you wait 🙂

I could have essentially kept what we already had in Owlbear Rodeo 1.0 with some tweaking, but my primary concern was being able to manage this myself while addressing the issues that we currently have.

If you reached the end of this post, thanks for sticking around! It’s taken a bunch of time and experimentation on my end to see if this was achievable for us, and even now I’m remaining open to other avenues for supporting our back-end.

Coming up

We have some big changes coming for Kenku FM. They should be coming out sometime this week.

At the moment Mitch is working on the new Attachments and Text workflow for the Owlbear Rodeo 2.0 user interface, and should have more to show next month.

Happy Gaming!